At Cloudsmith, we explore the entire Kubernetes release notes in depth ahead of each scheduled release. This post highlights the newly introduced Alpha-stage Kubernetes Enhancement Proposals (KEPs) in the upcoming 1.36 release. These Alpha improvements are early-stage, disabled by default, and not necessarily recommended for production environments, but they give a strong sense of Kubernetes' evolving direction and future capabilities. As always, we’ll narrow it down to the top 10 most interesting new features in version 1.36 of Kubernetes.

DRA advancements in v1.36

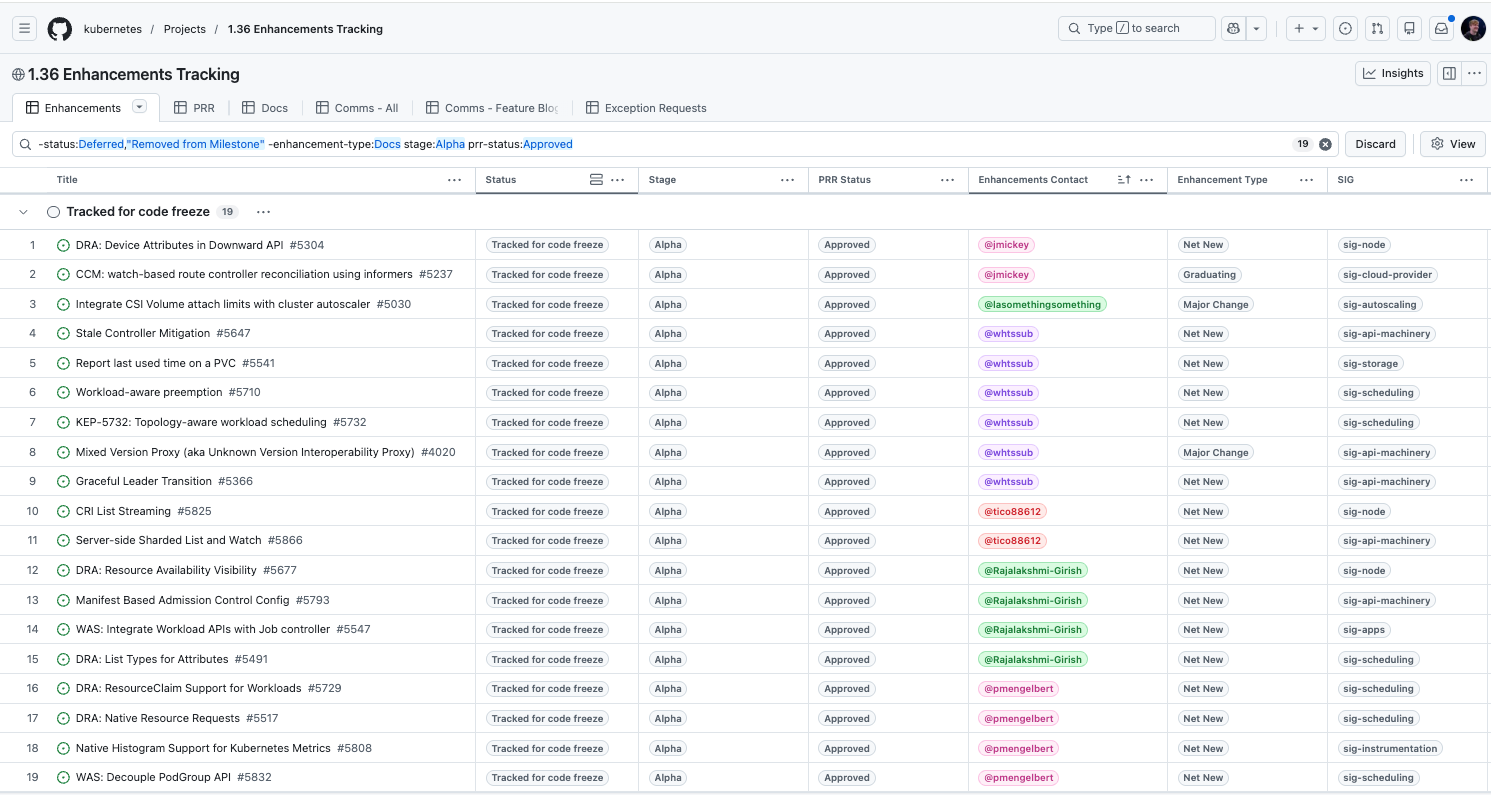

As we’ve seen in the past few versions of Kubernetes, more and more new features relate to Dynamic Resource Allocation (DRA). To help make Kubernetes the platform of choice for orchestrating AI-native workloads, five new DRA enhancements have been included in version 1.36.

- Device Attributes in Downward API (KEP #5304)

- Resource Availability Visibility (KEP #5677)

- List Types for Attributes (KEP #5491)

- ResourceClaim Support for Workloads (KEP #5729)

- Native Resource Requests (KEP #5517)

KEP 5304 allows Pods to actually see the hardware they are using. By exposing device identifiers from the DRA driver into the Downward API, workloads (especially those in KubeVirt) can now discover specific device attributes as environment variables or files, eliminating the guesswork of which physical hardware has been assigned to them.

KEP 5677 introduces a way for platform engineers to view real-time capacity. By bridging the gap between hardware definitions in ResourceSlices and consumption in ResourceClaims, it provides a clear view of what resources remain available across nodes or pools.
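To make the idea concrete, here is a minimal sketch of the accounting that KEP 5677 describes: devices advertised in ResourceSlices minus devices already allocated through ResourceClaims. The dictionary shapes and the `available_devices` helper are hypothetical and only illustrate the calculation; they are not the API that the KEP defines.

```python
def available_devices(resource_slices, resource_claims):
    """Return the devices still free in each pool.

    Both arguments are simplified, hypothetical stand-ins for the real
    ResourceSlice and ResourceClaim objects.
    """
    # Every (pool, device) pair that some claim has already been allocated.
    allocated = {(c["pool"], c["device"]) for c in resource_claims}

    free = {}
    for s in resource_slices:
        pool = s["pool"]
        free.setdefault(pool, [])
        for device in s["devices"]:
            if (pool, device) not in allocated:
                free[pool].append(device)
    return free


slices = [
    {"pool": "node-a", "devices": ["gpu-0", "gpu-1"]},
    {"pool": "node-b", "devices": ["gpu-0"]},
]
claims = [{"pool": "node-a", "device": "gpu-1"}]

remaining = available_devices(slices, claims)
```

In this example, `remaining` reports one free GPU on each pool, which is the kind of per-pool view the enhancement aims to surface.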

KEP 5491 supports list-typed attributes so users can handle complex hardware like GPUs or FPGAs. Instead of being limited to simple key-value pairs, the API can now describe complex topologies (such as a single device being linked to multiple NUMA nodes or PCIe roots) and allows users to write selection constraints against these lists.
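As a rough illustration of why list-typed attributes matter, the sketch below selects devices whose attribute either equals a value or, when the attribute is a list, contains it. The attribute names (`numaNodes`, `model`) and the helper functions are assumptions made for this example; real selection constraints are written against the DRA API rather than in Python.

```python
def attribute_matches(device, attribute, wanted):
    """True if the attribute equals `wanted` or, for a list, contains it."""
    value = device["attributes"].get(attribute)
    if isinstance(value, list):
        return wanted in value
    return value == wanted


def select_devices(devices, attribute, wanted):
    """Names of all devices satisfying the constraint."""
    return [d["name"] for d in devices if attribute_matches(d, attribute, wanted)]


devices = [
    # Hypothetical GPU attached to two NUMA nodes.
    {"name": "gpu-0", "attributes": {"model": "a100", "numaNodes": [0, 1]}},
    # Hypothetical GPU attached to a single NUMA node.
    {"name": "gpu-1", "attributes": {"model": "a100", "numaNodes": [1]}},
]

on_numa_0 = select_devices(devices, "numaNodes", 0)
on_numa_1 = select_devices(devices, "numaNodes", 1)
```

With a plain key-value model, `gpu-0` could only advertise one NUMA node; with a list it correctly shows up in both selections.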

KEP 5729 brings group awareness to resource management. By allowing ResourceClaims to be associated with PodGroups rather than individual Pods, Kubernetes can reserve a pool of resources for a collective task. This simplifies sharing hardware across multiple Pods in a single workload without needing to know specific resource names in advance.

KEP 5517 solves an awkward problem where core resources (like CPU) are managed by both the standard scheduler and a DRA driver. It introduces a unified accounting model that ensures the scheduler doesn't oversubscribe a node when core resources are being dynamically allocated via dra-driver-cpu.

Stale controller detection and mitigation

Enhancement #5647

This proposal introduces a mechanism to mitigate staleness in Kubernetes controllers, specifically those within the kube-controller-manager (KCM). Because controllers rely on a local cache populated by an eventually consistent watch stream, they often make decisions based on outdated information, leading to spurious reconciles or incorrect state transitions. By exposing resource versions through the informer and adding a BookmarkFunc to ResourceEventHandlerFuncs, controllers can now track whether their own recent writes have been propagated back to their local cache, ensuring they don’t operate on a ghost state.

The mitigation strategy is implemented as an opt-in read-after-write guarantee at critical decision points. Controllers can track the resourceVersion of objects they modify and pause further reconciliation for those specific objects until the cache catches up. For example, a DaemonSet controller can skip and requeue its work loop if it detects that the Pod updates it just sent to the API server aren't yet visible in its local view. This prevents the controller from fighting with itself and reduces unnecessary API pressure while maintaining exponential backoff semantics for safety.
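The read-after-write check can be sketched in a few lines. This is a simplified model, not the client-go implementation: the `WriteTracker` class and `reconcile` function are hypothetical, and resource versions are treated as plain integers that can be compared, which is an assumption made for the example.

```python
class WriteTracker:
    """Remembers the newest resourceVersion this controller wrote per object."""

    def __init__(self):
        self._pending = {}

    def record_write(self, key, resource_version):
        # Keep only the highest version written for each object key.
        self._pending[key] = max(resource_version, self._pending.get(key, 0))

    def is_stale(self, key, cached_version):
        written = self._pending.get(key)
        if written is None:
            return False  # no outstanding write for this object
        if cached_version >= written:
            del self._pending[key]  # the cache has caught up
            return False
        return True


def reconcile(key, cache, tracker):
    """Requeue instead of acting when the local cache lags our own writes."""
    if tracker.is_stale(key, cache.get(key, 0)):
        return "requeue"
    return "sync"


tracker = WriteTracker()
cache = {"ds/pod-a": 41}
tracker.record_write("ds/pod-a", 42)      # the controller just updated the Pod
first = reconcile("ds/pod-a", cache, tracker)
cache["ds/pod-a"] = 42                    # the watch stream delivers the update
second = reconcile("ds/pod-a", cache, tracker)
```

The first call requeues because the cache still holds version 41; the second proceeds once the controller's own write is visible.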

PersistentVolumeClaim last used time

Enhancement #5541

Kubernetes 1.36 introduces a mechanism to track and report the last time a PersistentVolumeClaim (PVC) was actively used by a Pod, surfacing this information directly in the pvc.Status. By adding a new condition (such as unusedSince) or a timestamp to the PVC object, Kubernetes can now accurately reflect when a volume was last mounted or accessed by a workload. This addresses a long-standing visibility gap where administrators struggled to identify orphaned or abandoned storage that was still consuming resources and incurring costs despite no longer being attached to any active processes.

For Kubernetes users in v1.36, this feature is a significant win for cost optimisation and cluster hygiene. In large-scale environments, PVCs often persist long after their associated Deployments or StatefulSets have been deleted, leading to massive storage sprawl. With the Last Used Time now visible via standard API calls, platform engineers can automate the cleanup of unused volumes with high confidence, ensuring they only delete data that is truly inactive. This native tracking eliminates the need for complex, custom sidecars or external monitoring tools to guess at volume utilisation.
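An automated cleanup job built on this signal could look roughly like the sketch below. The `unused_since` key is a hypothetical stand-in for whatever field or condition the final API exposes, and the PVC objects are plain dictionaries rather than real API objects.

```python
from datetime import datetime, timedelta, timezone


def cleanup_candidates(pvcs, now, max_idle):
    """Names of PVCs that have been unused for at least `max_idle`."""
    candidates = []
    for pvc in pvcs:
        unused_since = pvc.get("unused_since")
        if unused_since is None:
            continue  # still in use, or no data: never a candidate
        if now - unused_since >= max_idle:
            candidates.append(pvc["name"])
    return candidates


now = datetime(2026, 4, 1, tzinfo=timezone.utc)
pvcs = [
    {"name": "old-data", "unused_since": now - timedelta(days=45)},
    {"name": "recent", "unused_since": now - timedelta(days=3)},
    {"name": "in-use", "unused_since": None},
]

stale = cleanup_candidates(pvcs, now, timedelta(days=30))
```

Treating a missing timestamp as "do not delete" keeps the job conservative, which matters when the action is irreversible.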

Workload-aware preemption

Enhancement #5710

The proposed workload-aware preemption enhancement in 1.36 marks a shift from pod-centric to workload-centric scheduling. Building on previous gang scheduling foundations (such as KEP-4671), this update addresses a common problem in high-performance computing and AI workloads: partial preemption. In traditional Kubernetes, the scheduler might preempt only a few pods from a complex job (like an MPI or AI training task). Because these workloads are tightly coupled, losing even one pod renders the entire group useless, leading to significant resource waste as the remaining pods sit idle.

The new API allows developers to define a preemption unit, ensuring that the scheduler treats a group of pods as a single entity during resource contention. A key feature is delayed preemption, which prevents the scheduler from killing existing workloads until it is certain the new, higher-priority workload can actually be fully placed. By standardising these all-or-nothing preemption semantics within the core kube-scheduler, Kubernetes 1.36 provides a more robust framework for managing expensive hardware like GPUs, ensuring that disruptions only occur when they will result in actual progress for higher-priority tasks.
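The all-or-nothing and delayed-preemption ideas can be modelled with a toy planner. This is a sketch under simplifying assumptions (a single GPU counter, whole groups as victims, lowest priority evicted first); `plan_preemption` is a hypothetical helper and not the kube-scheduler's algorithm.

```python
def plan_preemption(free_gpus, needed_gpus, victim_groups):
    """Decide which whole workloads to evict so the incoming gang fits.

    victim_groups: list of (name, priority, gpus_held) for lower-priority
    workloads. Returns the groups to evict, or None when even evicting
    everything would not let the new workload be fully placed.
    """
    if free_gpus >= needed_gpus:
        return []  # fits already, nothing to preempt

    chosen = []
    reclaimed = free_gpus
    # Evict lowest-priority groups first, always as complete units.
    for name, _priority, gpus in sorted(victim_groups, key=lambda g: g[1]):
        chosen.append(name)
        reclaimed += gpus
        if reclaimed >= needed_gpus:
            return chosen

    # Delayed preemption: no eviction unless full placement is certain.
    return None


victims = [("train-a", 10, 4), ("train-b", 5, 4)]
fits_after_eviction = plan_preemption(2, 8, victims)
cannot_fit = plan_preemption(2, 16, victims)
```

The second call returns `None` rather than a partial victim list, so no running workload is disrupted for a job that could not start anyway.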

Topology-aware workload scheduling

Enhancement #5732

Kubernetes 1.36 introduces a topology-aware and DRA-aware scheduling algorithm for the kube-scheduler, specifically designed for high-performance distributed workloads like AI/ML training. While previous updates established how to group pods, this enhancement focuses on where those groups are physically placed. It allows the scheduler to treat a PodGroup as a single unit that must fit within specific Placements, which are subsets of the cluster such as a single server rack or a specific set of interconnected hardware. By evaluating these placements holistically rather than pod-by-pod, the scheduler can ensure low-latency communication and prevent fragmentation, where a workload is spread across too many network domains to function efficiently.

The design extends the Workload API to include TopologyConstraints (for node-label co-location) and DRAConstraints (for shared DRA). Strategically, this move brings logic previously found in external tools like Volcano or Kueue directly into the core Kubernetes scheduler. This integration allows the scheduler to use its high-fidelity filtering and scoring plugins to simulate the placement of an entire workload before committing resources, ensuring that complex hardware requirements and network topologies are respected without the operational overhead of third-party controllers.
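To show what evaluating a placement as a whole means, the sketch below groups nodes by a topology label and keeps only the domains that can hold the entire PodGroup. It assumes identical pods that each need the same number of GPUs; the label key, node shape, and `feasible_placements` helper are all hypothetical simplifications.

```python
def feasible_placements(pod_count, gpus_per_pod, nodes, topology_key):
    """Topology domains (for example racks) that can host every pod of a group."""
    slots = {}
    for node in nodes:
        domain = node["labels"].get(topology_key)
        if domain is None:
            continue  # node does not belong to any candidate placement
        # How many of the group's pods this node could run on its own.
        slots[domain] = slots.get(domain, 0) + node["free_gpus"] // gpus_per_pod
    return sorted(d for d, s in slots.items() if s >= pod_count)


nodes = [
    {"name": "n1", "labels": {"example.com/rack": "rack-1"}, "free_gpus": 4},
    {"name": "n2", "labels": {"example.com/rack": "rack-1"}, "free_gpus": 4},
    {"name": "n3", "labels": {"example.com/rack": "rack-2"}, "free_gpus": 8},
    {"name": "n4", "labels": {"example.com/rack": "rack-2"}, "free_gpus": 2},
]

four_pods = feasible_placements(4, 2, nodes, "example.com/rack")
five_pods = feasible_placements(5, 2, nodes, "example.com/rack")
```

A pod-by-pod scheduler could happily start a five-pod job on `rack-1` and strand the last pod elsewhere; checking the whole group first rules that rack out up front.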

Graceful leader transition

Enhancement #5366

This Alpha feature addresses a long-standing inefficiency in how core Kubernetes components (like the kube-controller-manager and kube-scheduler) handle High Availability. Currently, when a leader loses its lock, whether due to a network blip or a planned update, the entire process is forced to crash and restart to reset its state. This panic-on-loss approach is heavy on overhead, prevents clean resource cleanup, and relies on the kubelet to restart the container, which adds unnecessary latency to failovers.

The proposal introduces a three-phase plan to decouple leadership loss from process termination. By refactoring these components to track goroutines more strictly and handle context cancellation gracefully, the system can simply transition a leader back into a follower state. This allows the process to stay alive, keep its caches warm, and immediately compete for the lock again if needed. This change not only reduces the computational cost of leadership churn but also paves the way for faster, more reliable recovery in large-scale clusters.
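The difference from panic-on-loss can be shown with a small model. This is only a sketch of the pattern: a `threading.Event` stands in for Go's context cancellation, and the `Component` class is hypothetical rather than anything taken from the real components.

```python
import threading


class Component:
    """Toy control-plane component that survives losing its leader lock."""

    def __init__(self):
        self.role = "follower"
        self.cache = {}       # stays warm across leadership changes
        self._stop = None
        self._worker = None

    def _run(self, stop):
        # Leader-only work loop; exits promptly once `stop` is set.
        while not stop.wait(0.01):
            self.cache["last_sync"] = "ok"

    def acquire_lock(self):
        self._stop = threading.Event()
        self._worker = threading.Thread(
            target=self._run, args=(self._stop,), daemon=True
        )
        self._worker.start()
        self.role = "leader"

    def lose_lock(self):
        # Cancel and wait for leader-only work instead of exiting the process.
        self._stop.set()
        self._worker.join()
        self.role = "follower"

    def workers_alive(self):
        return self._worker is not None and self._worker.is_alive()


component = Component()
component.cache["pods"] = ["a", "b"]
component.acquire_lock()
component.lose_lock()
role_after_loss = component.role
cache_after_loss = component.cache["pods"]
```

Because every leader-only worker is tracked and joined, the process can safely call `acquire_lock()` again without a restart, which is the property the refactoring is meant to guarantee.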

CRI list streaming

Enhancement #5825

This KEP introduces server-side streaming RPCs to the Container Runtime Interface (CRI) to resolve a critical scalability bottleneck where the Kubernetes node agent (kubelet) fails to list containers on high-churn nodes. Currently, List* operations use a single unary response that is subject to a 16 MB gRPC message limit. Once a node exceeds roughly 10,000 containers, the response size triggers a total RPC failure. By introducing new streaming endpoints like StreamContainers, the runtime can send results one item at a time, effectively bypassing the message size limit without requiring a change to the underlying gRPC configuration or container garbage collection logic.

While this architectural shift eliminates the hard crashes caused by large message sizes, it’s worth noting that it’s a targeted fix for transport limits rather than resource consumption. Because the kubelet still collects the full stream into a single list before processing, it does not reduce the total memory pressure on the node. Instead, it ensures that environments with high pod churn (such as CI/CD runners or nodes managing thousands of CronJobs) remain stable and observable even when container counts spike.
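A simplified model makes both points visible: the unary call fails once the combined payload exceeds the limit, while the streamed call succeeds yet still builds the full list in memory. The function names and the use of raw byte strings as container records are assumptions for this sketch; it does not use gRPC or the real CRI types.

```python
MAX_MESSAGE_BYTES = 16 * 1024 * 1024  # the 16 MB message limit from the text


class MessageTooLarge(Exception):
    pass


def list_containers_unary(containers, limit=MAX_MESSAGE_BYTES):
    """One response carrying every container: fails past the size limit."""
    total = sum(len(c) for c in containers)
    if total > limit:
        raise MessageTooLarge(f"{total} bytes exceeds {limit}")
    return list(containers)


def stream_containers(containers, limit=MAX_MESSAGE_BYTES):
    """One message per container: only a single item must fit in the limit."""
    for c in containers:
        if len(c) > limit:
            raise MessageTooLarge(f"{len(c)} bytes exceeds {limit}")
        yield c


def kubelet_list_via_stream(containers):
    # The consumer still gathers the whole stream into one list,
    # so memory use is unchanged; only the transport limit is avoided.
    return list(stream_containers(containers))


# 10,000 hypothetical container records of 2 KiB each (about 20 MB in total).
containers = [b"x" * 2048] * 10000

try:
    list_containers_unary(containers)
    unary_failed = False
except MessageTooLarge:
    unary_failed = True

streamed = kubelet_list_via_stream(containers)
```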

Manifest-based admission control config

Enhancement #5793

I’m most excited to see the addition of file-based Admission Control, a mechanism that allows platform engineers to configure admission webhooks and policies via static manifest files rather than through the Kubernetes API. By loading these policies directly into the kube-apiserver at startup, it closes an existing limitation in bootstrapping whereby a cluster might briefly process requests before API-based policies are active. These static policies exist in an isolated zone of sorts, since they cannot be modified or deleted via kubectl or API calls, making them immune to accidental or malicious tampering by users with high-level cluster privileges.

A primary benefit of this approach is self-protection. Since these file-based policies are active before the API server begins serving requests and aren't stored in etcd, they can be used to protect the API-based admission resources themselves (like ValidatingAdmissionPolicy). This prevents circular dependencies and ensures that critical security guardrails cannot be bypassed even if etcd is compromised or unavailable. While the API server can watch these files for dynamic updates at runtime, the policies remain strictly static from the perspective of the Kubernetes API, using a reserved .static.k8s.io naming suffix to maintain a clear separation of concerns.

Server-side sharded list and watch

Enhancement #5866

This net new Alpha feature adds server-side sharding for LIST and WATCH requests in the Kubernetes API server, a major scalability improvement for horizontally scaled controllers. Historically, sharded controllers like kube-state-metrics performed client-side filtering, meaning every replica received and processed the entire stream of cluster events only to discard irrelevant data. This full stream penalty resulted in massive network overhead and wasted CPU. By moving the filtering logic to the API server via a new shardSelector parameter, each controller replica now only receives the specific slice of data it is responsible for, enabling true linear scaling and significantly reducing resource consumption across the cluster.

The implementation utilises a consistent hashing strategy defined by the client using a CEL-based syntax, such as shardRange(object.metadata.uid, start, end). By partitioning the keyspace (initially focusing on UIDs and Namespaces), the API Server can compute the hash of an object and dispatch it only to the relevant watcher. This architectural shift provides the fundamental building blocks for large-scale Kubernetes environments to manage high-churn resources, like Pods, without overwhelming the network or the controllers themselves.
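The partitioning idea can be sketched as follows. The hash function (SHA-256 truncated to 64 bits), the size of the hash space, and the helper names are assumptions for illustration; the source only names the shardSelector parameter and the shardRange expression, not the hashing details.

```python
import hashlib

HASH_SPACE = 2 ** 64  # assumed keyspace size for this sketch


def shard_hash(uid):
    """Map a UID to a stable position in the keyspace."""
    digest = hashlib.sha256(uid.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


def shard_bounds(index, total):
    """Half-open [start, end) range owned by replica `index` of `total`."""
    return HASH_SPACE * index // total, HASH_SPACE * (index + 1) // total


def in_shard(uid, start, end):
    # Loosely mirrors the idea behind shardRange(object.metadata.uid, start, end).
    return start <= shard_hash(uid) < end


def objects_for_shard(uids, index, total):
    """What the server would dispatch to one replica."""
    start, end = shard_bounds(index, total)
    return [u for u in uids if in_shard(u, start, end)]


uids = [f"uid-{i}" for i in range(200)]
shards = [objects_for_shard(uids, i, 3) for i in range(3)]
```

Because the ranges are contiguous and non-overlapping, every object lands on exactly one replica, and no replica ever sees events for objects it does not own.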

Native histogram support for Kubernetes metrics

Enhancement #5808

Last but not least, this proposed addition of a dedicated CLI flag --enable-feature=native-histograms in Kubernetes 1.36 allows platform teams to transition from classic to native histograms. While native histograms offer significantly higher resolution and efficiency, enabling them is technically a breaking change for existing observability stacks. Because Prometheus prioritises native histograms by default when the feature is enabled, a simple Kubernetes upgrade could cause control-plane components to stop emitting classic histograms, instantly breaking any dashboards or alerts that rely on the legacy format.

The benefit of this net new enhancement is controlled opt-in. Even after the feature gate reaches Beta (possibly on by default), the CLI flag will allow platform engineers to explicitly manage the migration at their own pace. This prevents the resource-heavy burden of scraping both formats simultaneously (which I’d say is prohibitive in large-scale clusters) and avoids forcing users into complex scraper configurations to drop native metrics. Essentially, it decouples the Kubernetes feature availability from the actual data migration, ensuring that upstream rules and custom alerts don't fail unexpectedly.

To provide a bit more context, this is the existing migration path from classic histograms, as documented for Grafana Mimir:

https://grafana.com/docs/mimir/next/send/native-histograms/#migrate-from-classic-histograms

Wrapping up

Kubernetes 1.36 introduces a strong wave of innovation at the Alpha level, with significant progress across AI/ML workload orchestration (five new DRA advancements, workload-aware preemption, and topology-aware workload scheduling), control-plane self-protection through manifest-based admission control policies loaded at API server startup, and a native histogram flag that lets platform teams move from classic to native histograms at their own pace without breaking existing dashboards and alerts.

While there’s a good chance you won’t be testing these experimental features when Kubernetes 1.36 is finally released at the end of the month, these Alpha features are still worth tracking as they mature. Together, they show a maturing platform that prioritises Quality of Life (QoL) improvements alongside new AI-focused features aimed at making Kubernetes the platform of choice for running LLM-based workloads. As always, if you have questions about the upcoming release beyond just Alpha updates, there’s an open thread on Reddit for the 1.36 release.