This article is based on the recent Weave Intelligence research report, The four levels of agentic software development in the enterprise.

Something has shifted in the last eighteen months that I don't think has been named clearly enough yet. The conversation in enterprise software teams has moved from "should we use AI?" to "why aren't we seeing the gains everyone else is talking about?" The answer, almost every time, is the same. Procuring fancy models isn't the driver of productivity. What actually changes the equation is how well you structure your platform to make productive use of agents at scale.

The organizations pulling ahead are not the ones with access to the best models. They are the ones who have restructured how software gets built. They have evolved their production systems to let agents do real work, not just assist humans at the keyboard. It's hard to describe the difference between "using AI for autocomplete and a little research" and "orchestrating agents" to somebody who hasn't felt it firsthand. But the difference in productivity is striking.

The bottleneck is not model capability. Models are converging toward commodities. What separates organizations that see 30% throughput gains from those still waiting is the production system in which those models operate. An agent that cannot access your codebase reliably, runs under human credentials, has no sandboxed environment, and feeds into a validation process designed for one human-reviewed PR at a time cannot do much. The model is not the constraint. The platform is.

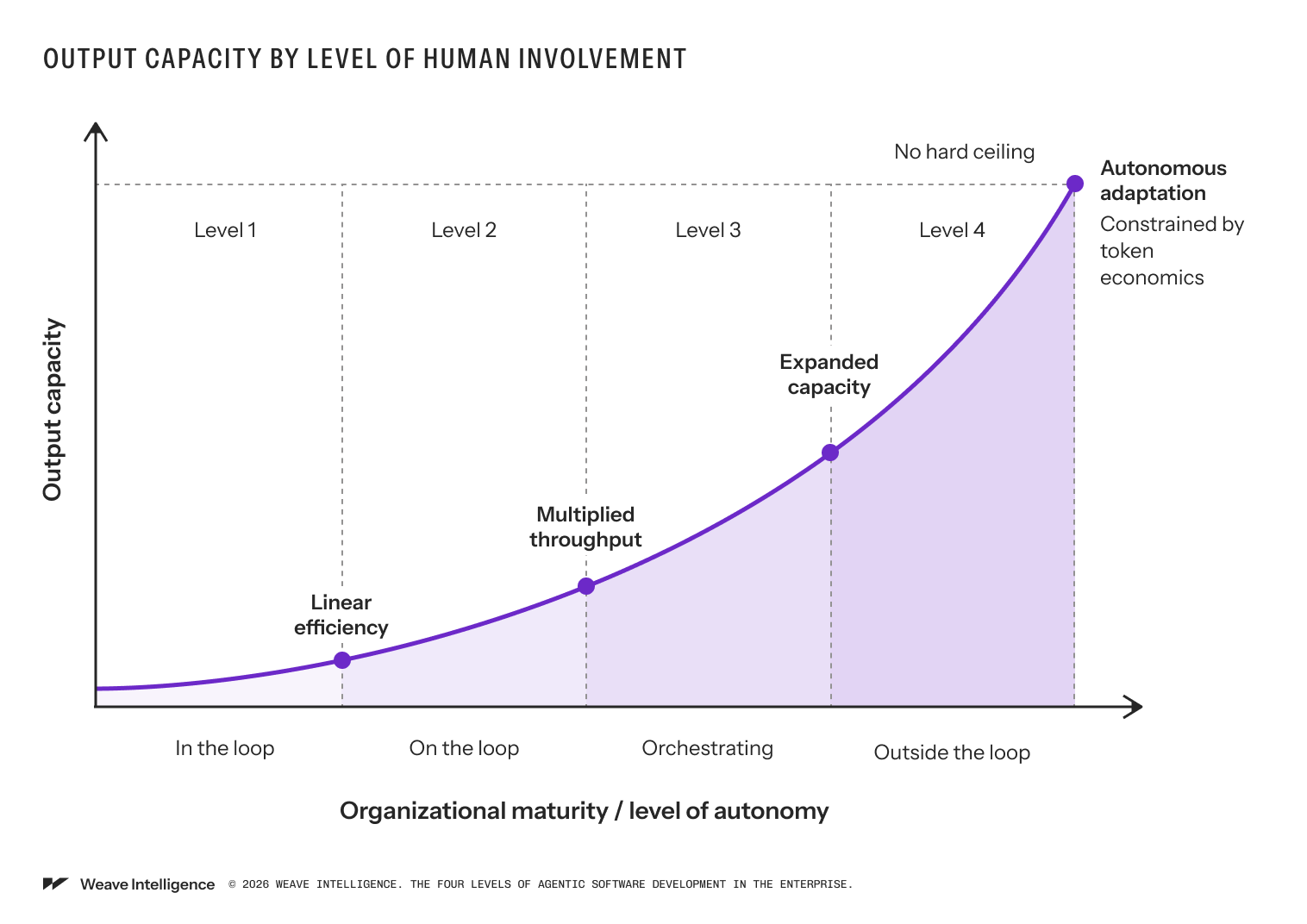

Over the past two years, through our research at Weave Intelligence and our advisory work with enterprise software teams, we have seen a consistent pattern emerge. There are four distinct levels of agentic development, each defined not by which AI tools you are using but by how much of the work humans still need to initiate and approve. As you move through them, the human role shifts from execution engine to reviewer to orchestrator to system designer. Understanding where your organization sits is the most important first step.

The four levels


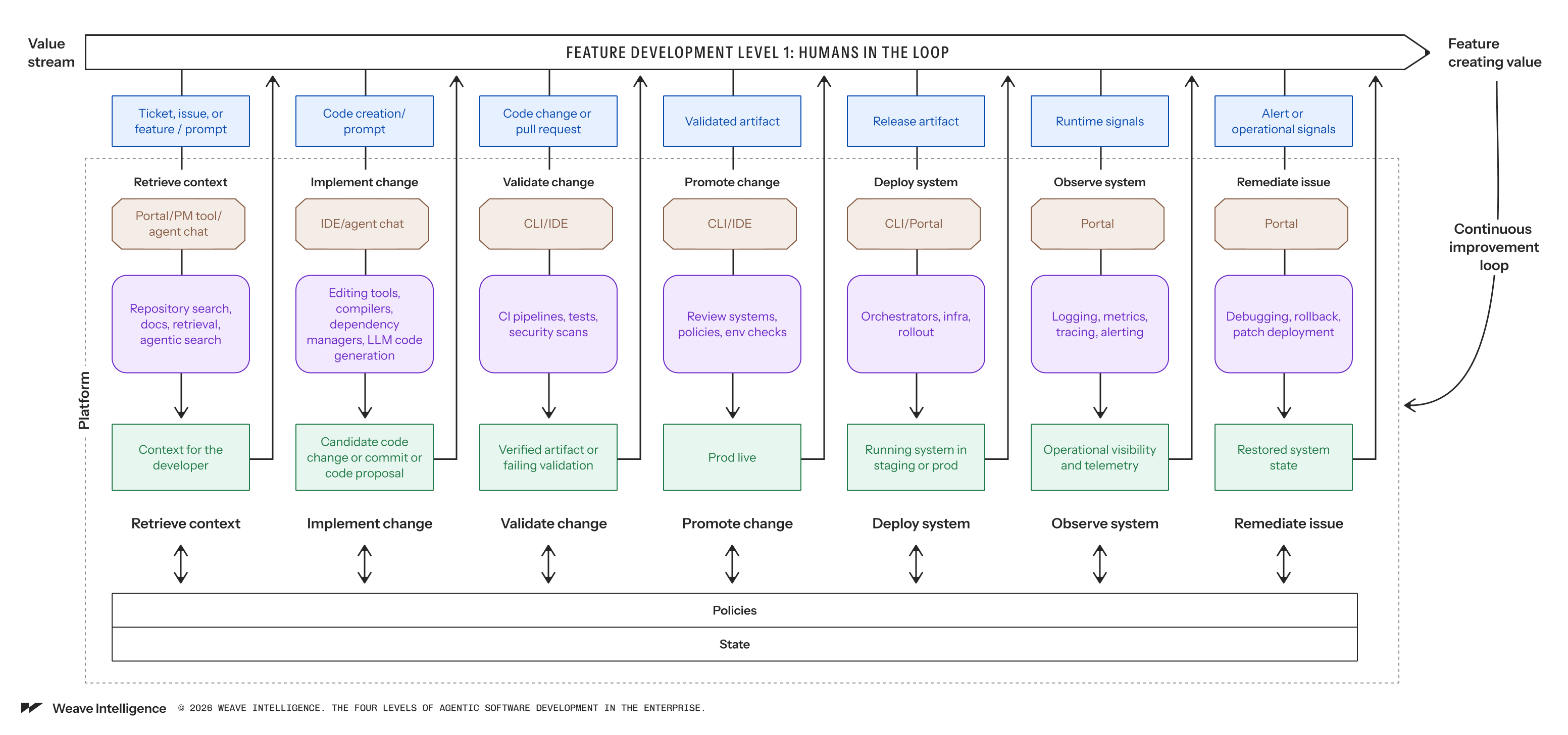

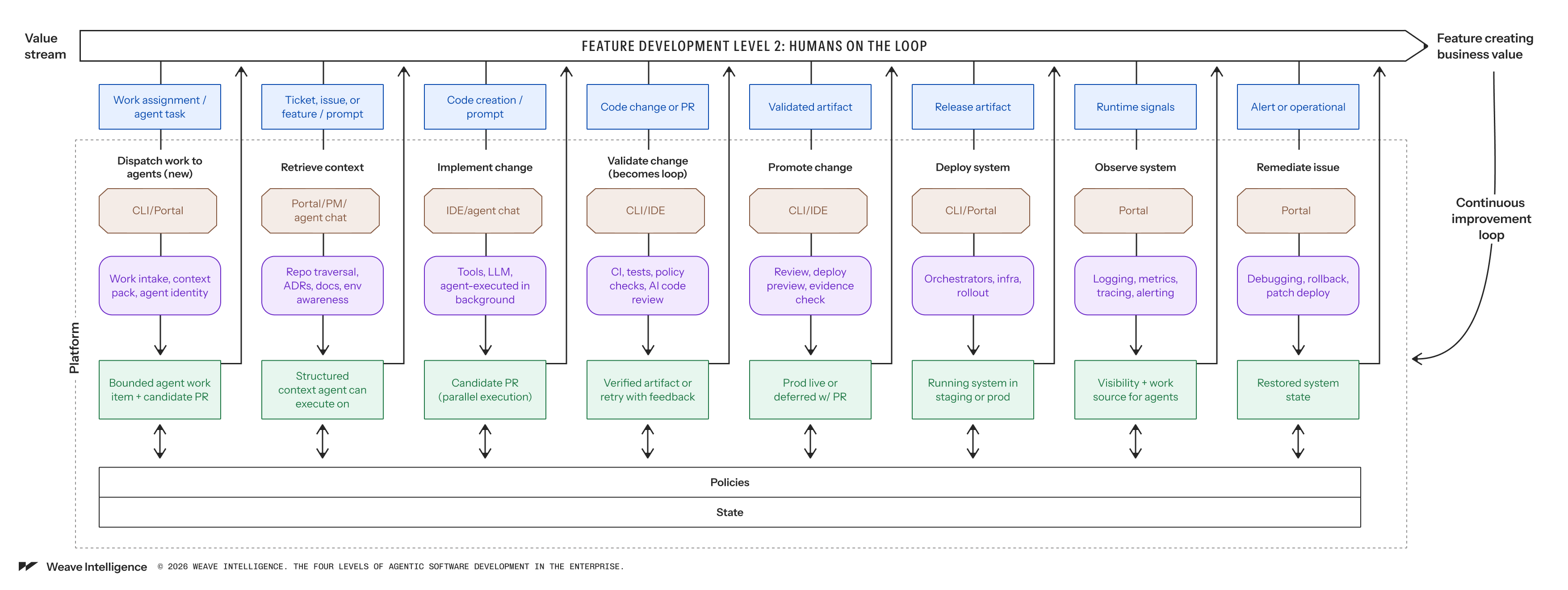

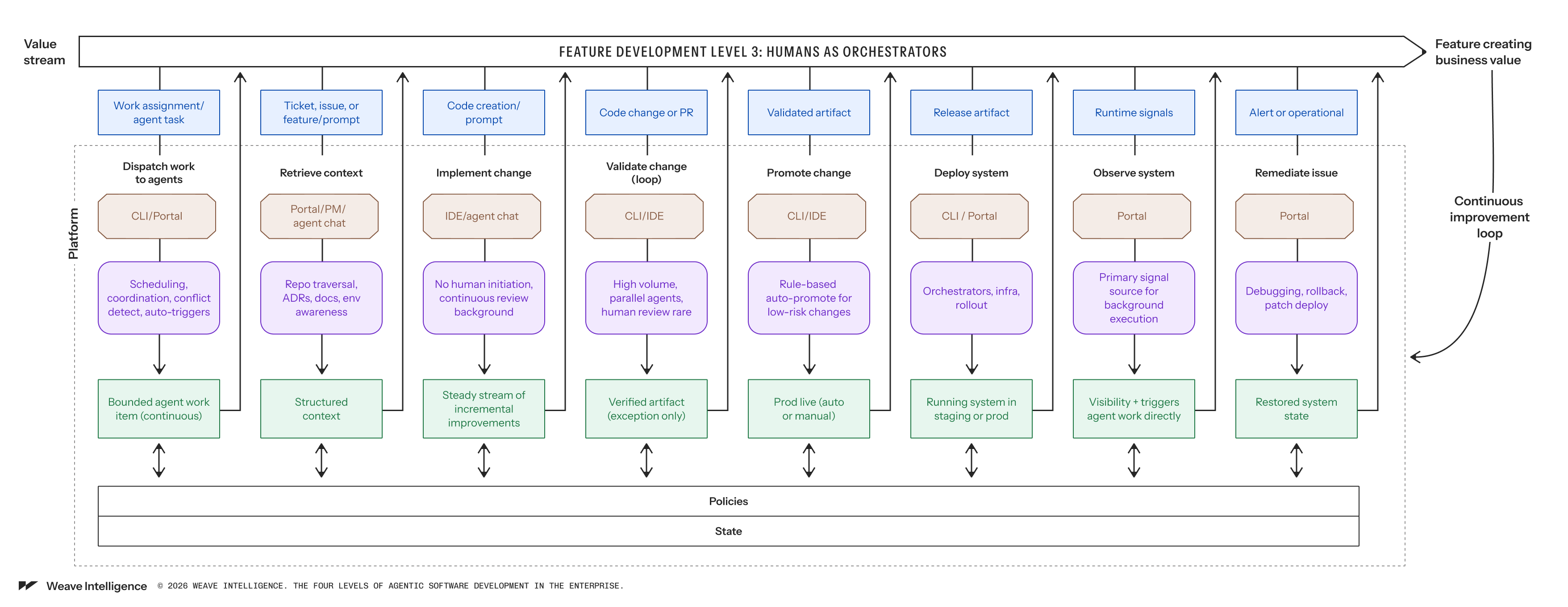

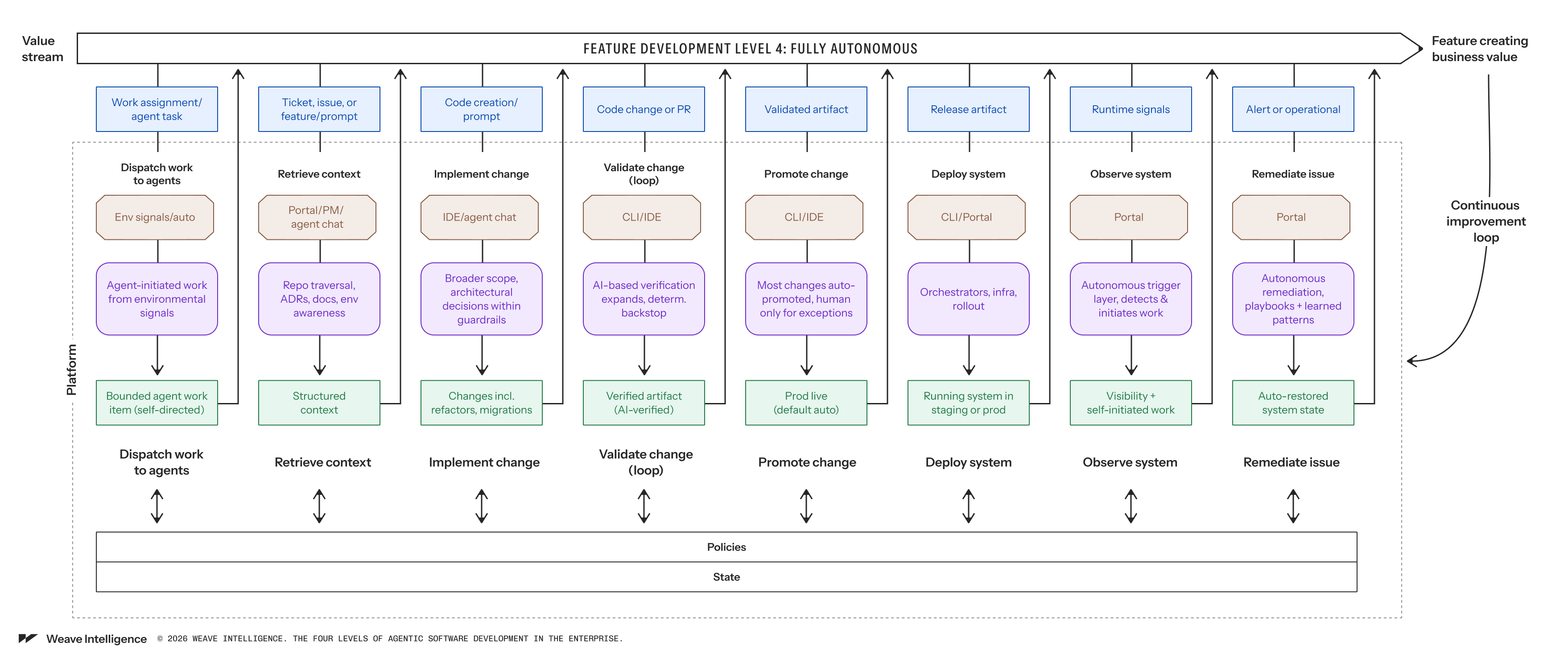

At Level 1, the human is still the execution engine. Agents assist, but humans approve everything. At Level 2, agents begin executing work in parallel, and humans verify outcomes rather than inspect every line. At Level 3, the platform runs continuously in the background, and human review becomes exception-based. At Level 4, the system initiates its own work in response to environmental signals, within guardrails set by humans.

Each level demands more from the underlying platform, and none of them is achievable simply by choosing a better model. Each requires deliberate platform architecture decisions. Let’s walk through them one by one.

Level 1: Human in the loop

Most enterprise software teams are here today. At this stage, agents suggest, and humans approve everything. The developer remains the execution engine.

In practice, this looks like AI autocomplete, prompt-driven code generation, and the use of agents to understand unfamiliar parts of the system. The developer takes the output, modifies it, and decides what to include in the codebase. The platform does not need to change to support this.

The gains at this level are real but limited. Output is still constrained by human review bandwidth, which means one person, one keyboard, one PR at a time.

Level 2: Humans on the loop

This is where things get genuinely different, and where most organizations underestimate the platform work required.

At Level 2, agents stop being a private tool on a workstation and become participants in the value stream. Humans dispatch agents to handle chunks of work on their behalf. Concretely, multiple agents work in parallel in the background, and multiple PRs advance simultaneously. The human is no longer the execution engine but still decides what to advance.

This is the first real parallelization. Not more developers, but more work items moving forward at the same time because agents are doing the execution.

The platform has to change meaningfully to support this. A new golden path appears: we call it “dispatch work to agents”. The platform must now manage agent identity, provision sandboxed environments per agent session, enforce resource limits, and package context in a way that agents can actually act on. Without this infrastructure, agents collide, consume unbounded compute, and produce outputs that the system cannot safely evaluate.
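To make the "dispatch work to agents" path more concrete, here is a minimal sketch of what the platform might record when a human hands work to an agent. Every name in it (`AgentSession`, `ResourceLimits`, `dispatch`) and every default value is an assumption made for illustration, not something taken from the report or from any particular platform.

```python
from __future__ import annotations

import uuid
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ResourceLimits:
    """Hard ceilings enforced on one agent session (illustrative defaults)."""
    cpu_cores: float = 2.0
    memory_mb: int = 4096
    max_runtime_s: int = 1800
    max_tokens: int = 500_000


@dataclass(frozen=True)
class AgentSession:
    """What the platform provisions before an agent touches any code."""
    agent_id: str                    # non-human identity, separate from the human's credentials
    dispatched_by: str               # the human who asked for the work
    sandbox_id: str                  # one isolated environment per session
    task: str
    context_paths: tuple[str, ...]   # packaged context the agent may read
    limits: ResourceLimits = field(default_factory=ResourceLimits)


def dispatch(task: str, dispatched_by: str, context_paths: list[str],
             limits: ResourceLimits | None = None) -> AgentSession:
    """Create a session with its own identity and sandbox."""
    if not context_paths:
        raise ValueError("refusing to dispatch an agent with no packaged context")
    key = uuid.uuid4().hex[:12]
    return AgentSession(
        agent_id=f"agent-{key}",
        dispatched_by=dispatched_by,
        sandbox_id=f"sandbox-{key}",
        task=task,
        context_paths=tuple(context_paths),
        limits=limits if limits is not None else ResourceLimits(),
    )
```

The design point is that identity, sandbox, limits, and context are fixed at dispatch time, so two sessions started by the same person never share credentials or an environment.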

The most important structural change at Level 2 concerns validation. At Level 1, validation is a gate: a human walks a PR through CI, reviews the diff, and approves it. At Level 2, that model breaks immediately. Agents generate changes at volumes and cadences that human review cannot match. To keep up, validation has to become a loop.

The agent generates output. The platform runs automated checks: tests, security scans, and policy evaluation. If the output fails, the failure is routed back to the agent, which modifies its change and retries. The human reviews aggregate results, not individual diffs. Instead of reading code line by line, the human is verifying behavior: does this work the way it should?
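The loop can be sketched in a few lines. This is a simplified illustration under stated assumptions: `validation_loop`, `LoopResult`, and the toy agent and policy check are hypothetical names, the "agent" is a plain function rather than a model call, and the retry bound of three is arbitrary.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# A check returns None when the change passes, or a failure message when it does not.
Check = Callable[[str], Optional[str]]


@dataclass
class LoopResult:
    accepted: bool
    attempts: int
    change: str
    failures: List[str]  # failures from the last attempt; empty when accepted


def validation_loop(generate: Callable[[List[str]], str],
                    checks: List[Check],
                    max_attempts: int = 3) -> LoopResult:
    """Generate a change, run every check, route failures back, retry up to a bound."""
    feedback: List[str] = []
    change = ""
    for attempt in range(1, max_attempts + 1):
        change = generate(feedback)  # the agent sees the previous attempt's failures
        feedback = []
        for check in checks:
            message = check(change)
            if message is not None:
                feedback.append(message)
        if not feedback:
            return LoopResult(True, attempt, change, [])
    # Retries exhausted: hand the change and its failures to a human.
    return LoopResult(False, max_attempts, change, feedback)


# Toy stand-ins so the sketch runs without a model or a CI system.
def toy_agent(feedback: List[str]) -> str:
    return "timeout = 30" if feedback else "timeout = 30  # TODO confirm"


def no_todo_policy(change: str) -> Optional[str]:
    return "policy: unresolved TODO in change" if "TODO" in change else None


result = validation_loop(toy_agent, [no_todo_policy])
print(result.accepted, result.attempts)  # True 2
```

The bound on attempts matters: without it, a change that can never pass would consume compute indefinitely instead of being escalated.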

This shift is harder than it sounds. It requires comprehensive automated testing, security scanning integrated into the pipeline, and policy enforcement as deterministic gates rather than manual audits. You have to balance the inherent randomness of probabilistic systems against the rigid predictability of deterministic ones. Getting this right is what makes Level 2 sustainable instead of chaotic. Organizations that skip this work and just start dispatching agents at scale hit a wall fast: CI pipelines saturate, review queues explode, and teams lose trust in the outputs.

The jump from Level 1 to Level 2 is the hardest transition in the model, and the one with the biggest payoff.

Level 3: Humans as orchestrators

At Level 3, the platform starts to run continuously in the background. Agents no longer wait for a human to send them work. The system begins generating work based on what it observes.

A failing dependency check triggers an agent to generate a fix. A security advisory lands, and agents begin assessing exposure across the codebase and generating patches. A recurring operational anomaly is automatically routed to an agent workflow. The human defines the rules, and the platform executes them.
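One way to picture "the human defines the rules, and the platform executes them" is a small routing table from observed signals to agent workflows. The sketch below is an assumption-laden illustration: the signal kinds, workflow names, and the threshold of three occurrences for a "recurring" anomaly are all invented for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class Signal:
    kind: str                  # e.g. "dependency_check_failed", "security_advisory"
    payload: Dict[str, str]


@dataclass(frozen=True)
class Rule:
    name: str
    matches: Callable[[Signal], bool]
    workflow: str              # hypothetical workflow identifier


def is_recurring_anomaly(signal: Signal) -> bool:
    # Assumed threshold: an anomaly counts as recurring after three occurrences.
    return (signal.kind == "operational_anomaly"
            and int(signal.payload.get("occurrences", "0")) >= 3)


# Rules are written by humans; the platform only evaluates them.
RULES: List[Rule] = [
    Rule("failing-dependency", lambda s: s.kind == "dependency_check_failed",
         "generate_dependency_fix"),
    Rule("security-advisory", lambda s: s.kind == "security_advisory",
         "assess_exposure_and_patch"),
    Rule("recurring-anomaly", is_recurring_anomaly, "anomaly_remediation"),
]


def route(signal: Signal, rules: List[Rule] = RULES) -> Optional[str]:
    """Return the workflow of the first matching rule, or None to leave it to a human."""
    for rule in rules:
        if rule.matches(signal):
            return rule.workflow
    return None
```

Returning `None` for anything unmatched keeps the default conservative: signals nobody wrote a rule for do not trigger agent work.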

Human review becomes exception-based. Instead of approving individual changes, humans design the policies that determine when the system may advance work on its own. Low-risk changes, such as dependency updates, small performance improvements, and well-understood operational fixes, can be promoted automatically once validation evidence is sufficient. Humans review the exceptions and the rules, not every change.
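A promotion policy of this kind can be expressed as a deterministic function over validation evidence. The following is only a sketch: the change-type labels, the 200-line size cap, and the names `Evidence` and `decide` are assumptions chosen for illustration, and a real policy would be owned and tuned by the humans responsible for it.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_PROMOTE = "auto_promote"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Evidence:
    change_type: str           # e.g. "dependency_update"
    tests_passed: bool
    security_scan_clean: bool
    lines_changed: int


# Assumed allowlist and size cap, for illustration only.
LOW_RISK_TYPES = frozenset({
    "dependency_update",
    "small_perf_improvement",
    "known_operational_fix",
})
MAX_AUTO_LINES = 200


def decide(evidence: Evidence) -> Decision:
    """Promote automatically only when the change is low-risk and the evidence is complete."""
    sufficient = (evidence.tests_passed
                  and evidence.security_scan_clean
                  and evidence.lines_changed <= MAX_AUTO_LINES)
    if evidence.change_type in LOW_RISK_TYPES and sufficient:
        return Decision.AUTO_PROMOTE
    return Decision.HUMAN_REVIEW  # everything else is an exception for a human
```

Because the function is deterministic, the same evidence always yields the same decision, which is what makes the policy itself reviewable.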

There are already a surprising number of organizations at Level 3, or approaching it, particularly for their frontend estates. This is not a future state. It is a present reality for teams that have done the platform work.

The constraint at this level shifts from review bandwidth to architectural maturity and cost management. Token economics becomes a real concern. An organization running agents continuously in the background on thousands of changes needs to govern what that costs, not just whether it is safe.
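Governing cost can start with something as simple as a per-workflow token ceiling that is checked before an agent runs. The class below is a hypothetical sketch; `TokenBudget`, its method names, and the choice to give unknown workflows no budget are assumptions, not a description of any specific tool.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class TokenBudget:
    """Tracks token spend per workflow against a ceiling for one budget period."""
    limits: Dict[str, int]                               # workflow -> max tokens per period
    spent: Dict[str, int] = field(default_factory=dict)

    def try_spend(self, workflow: str, tokens: int) -> bool:
        """Record the spend and return True if it fits; otherwise change nothing."""
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        limit = self.limits.get(workflow, 0)             # unknown workflows get no budget
        used = self.spent.get(workflow, 0)
        if used + tokens > limit:
            return False
        self.spent[workflow] = used + tokens
        return True

    def remaining(self, workflow: str) -> int:
        return self.limits.get(workflow, 0) - self.spent.get(workflow, 0)


budget = TokenBudget({"dependency_updates": 1000})
print(budget.try_spend("dependency_updates", 600))   # True
print(budget.try_spend("dependency_updates", 500))   # False, would exceed the ceiling
print(budget.remaining("dependency_updates"))        # 400
```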

Level 4: Fully autonomous

Level 4 is where the production system becomes partially self-adjusting. Agents monitor environmental signals (customer behavior, operational telemetry, security findings, cost anomalies) and initiate new work autonomously within predefined guardrails. They open tickets, propose changes, remediate issues, and adapt configurations without waiting for a human to ask.

We want to be clear: very few organizations operate at this level today. We are aware of isolated examples, including a SaaS company where agents listen to customer calls, detect bugs mentioned in those calls, and automatically generate fixes. But we frame Level 4 as a projection of the trajectory we are observing, not a description of widespread current practice. There is, however, no technical reason we will not get there. In hindsight, it's obvious that automated trading systems can move markets at high speed, yet at the time that idea felt utterly absurd. We believe the same applies here. Given the deterministic nature of software, getting there is a question of inference, the balance between autonomy and guardrails, and a high frequency of repeated attempts.

What makes Level 4 structurally significant is not the autonomy itself but what the autonomy enables: continuous large-scale refactoring that would never get prioritized when humans have to execute every step; proactive security patching at the speed of CVE publication rather than on quarterly cycles; demand-responsive infrastructure adaptation without a human interpreting a dashboard first; and self-improving validation loops that update their own rules based on failure patterns.

At Level 4, platform engineers stop thinking primarily about software development as a human workflow. They think of it as an autonomous production process that continuously adapts software to the environment inside a set of deterministic guardrails they design and own. In our high-frequency trading analogy, we’re moving from traders to system builders.

Where does your organization sit?

The honest answer for most enterprise teams is somewhere between Level 1 and Level 2, with some teams starting to see the edge of Level 3.

The question to ask is not "which model are we using?" It is "what does our platform actually support?" If agents cannot reliably retrieve context, you are stuck at Level 1. If validation is still a single human-reviewed gate, you cannot scale to Level 2 without drowning your CI pipeline. If the platform has no concept of non-human identity, you cannot govern which agents are allowed to do what.

These are platform problems. And they are exactly the problems that platform engineers are best positioned to solve.

The organizations that understand this and act on it now are not just improving developer productivity. They are redesigning their production systems in a way that compounds. The gap between them and organizations still waiting to see how the AI landscape settles is growing every quarter.

If you want to find out which level your organization is at, take the self-assessment "How ready is your platform to safely run agents?"