This year's DORA report clearly showed how AI acts as an amplifier, magnifying an engineering organization's strengths but also its weaknesses. When infrastructure is fragmented, AI amplifies the chaos. But for organizations with strong underlying systems, AI can drive dramatic performance improvements. Jellyfish’s AI Engineering Trends study, which tracked 20 million pull requests (PRs) across more than 700 companies and 200,000 engineers, found that organizations with the highest adoption maturity see roughly 2x the PR throughput of those in the earliest stages.

An internal developer platform (IDP) plays an essential role in providing the environment and context for AI to succeed. For software developers, IDPs reduce friction and cognitive load with golden paths that gather best practices, configuration, and tooling. Instead of starting from scratch when spinning up services or deploying to production, developers can follow the recommended route with consistent results.

In addition to helping individual developers, platform engineering offers organization-wide benefits. By baking guardrails into platforms, engineering orgs can prevent AI tools from accessing sensitive data and enforce security policies such as encryption and least-privilege access.

These are all major contributions, but engineering leaders can sometimes struggle to demonstrate to their executive peers exactly how and where platforms add value. With accurate metrics, platform engineering teams can quantify the impact of their IDP and keep pace with rapidly evolving AI capabilities.

Here's what your platform team needs to measure and why.

Time-to-market matters

Velocity metrics aren't just for product teams; they're also a strong indicator of how your platform is performing. By comparing metrics such as cycle time and lead time for changes before and after the platform was introduced, you can gauge how much faster developers are shipping features. That link between platform engineering and velocity shows that the benefits extend all the way to the end user: faster shipping means more frequent access to new features and increased innovation.
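As a rough illustration of that before-and-after comparison, here is a minimal Python sketch. It assumes you can export, for each change, a first-commit timestamp and a production-deploy timestamp; the field names (`first_commit`, `deployed`), the helper functions, and the sample data are all hypothetical and not taken from any particular tool.

```python
from datetime import datetime
from statistics import median


def lead_time_hours(first_commit: str, deployed: str) -> float:
    """Hours between first commit and production deploy (ISO 8601 strings)."""
    delta = datetime.fromisoformat(deployed) - datetime.fromisoformat(first_commit)
    return delta.total_seconds() / 3600


def median_lead_time(changes) -> float:
    """Median lead time in hours; the median is less skewed by outliers than the mean."""
    return median(lead_time_hours(c["first_commit"], c["deployed"]) for c in changes)


def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, in percent (negative means faster)."""
    return (after - before) / before * 100


# Made-up sample data, for illustration only.
pre_platform = [
    {"first_commit": "2024-01-08T09:00:00", "deployed": "2024-01-11T09:00:00"},
    {"first_commit": "2024-01-15T10:00:00", "deployed": "2024-01-17T10:00:00"},
    {"first_commit": "2024-01-22T08:00:00", "deployed": "2024-01-26T08:00:00"},
]
post_platform = [
    {"first_commit": "2024-06-03T09:00:00", "deployed": "2024-06-04T09:00:00"},
    {"first_commit": "2024-06-10T10:00:00", "deployed": "2024-06-11T22:00:00"},
    {"first_commit": "2024-06-17T08:00:00", "deployed": "2024-06-19T08:00:00"},
]

before = median_lead_time(pre_platform)
after = median_lead_time(post_platform)
change = percent_change(before, after)
print(f"median lead time: {before:.0f}h -> {after:.0f}h ({change:+.0f}%)")
```

Running the same calculation on a rolling window, rather than once, is one way to spot the plateaus that signal it is time to dig deeper.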

Tracking how speed changes over time also allows you to make informed platform improvements. If key metrics plateau, you'll want to dig deeper and find out what developers are missing in order to be more productive.

AI adoption and impact

Above all else, business leaders want to understand the impact of their AI investments. If you can show that your platform enables developers to work better with AI, that's a huge win.

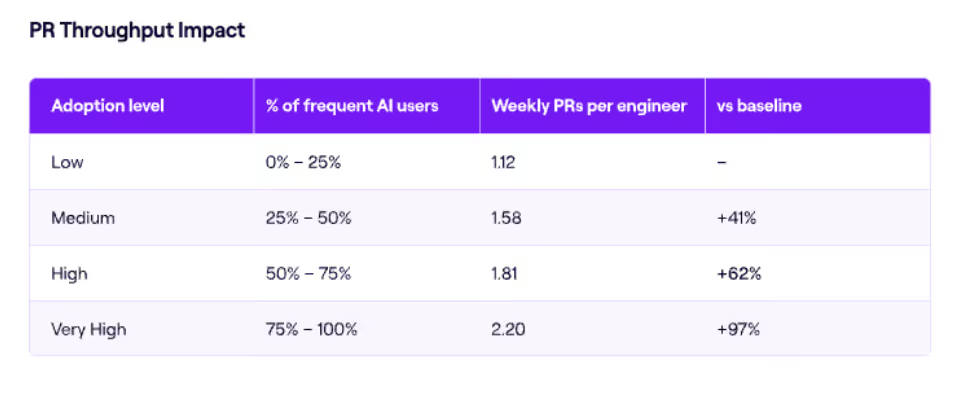

Jellyfish's AI Engineering Trends study found that organizations with medium adoption maturity saw 1.58X PR throughput, while those with high and very high maturity saw 1.8X and 2.2X, respectively.

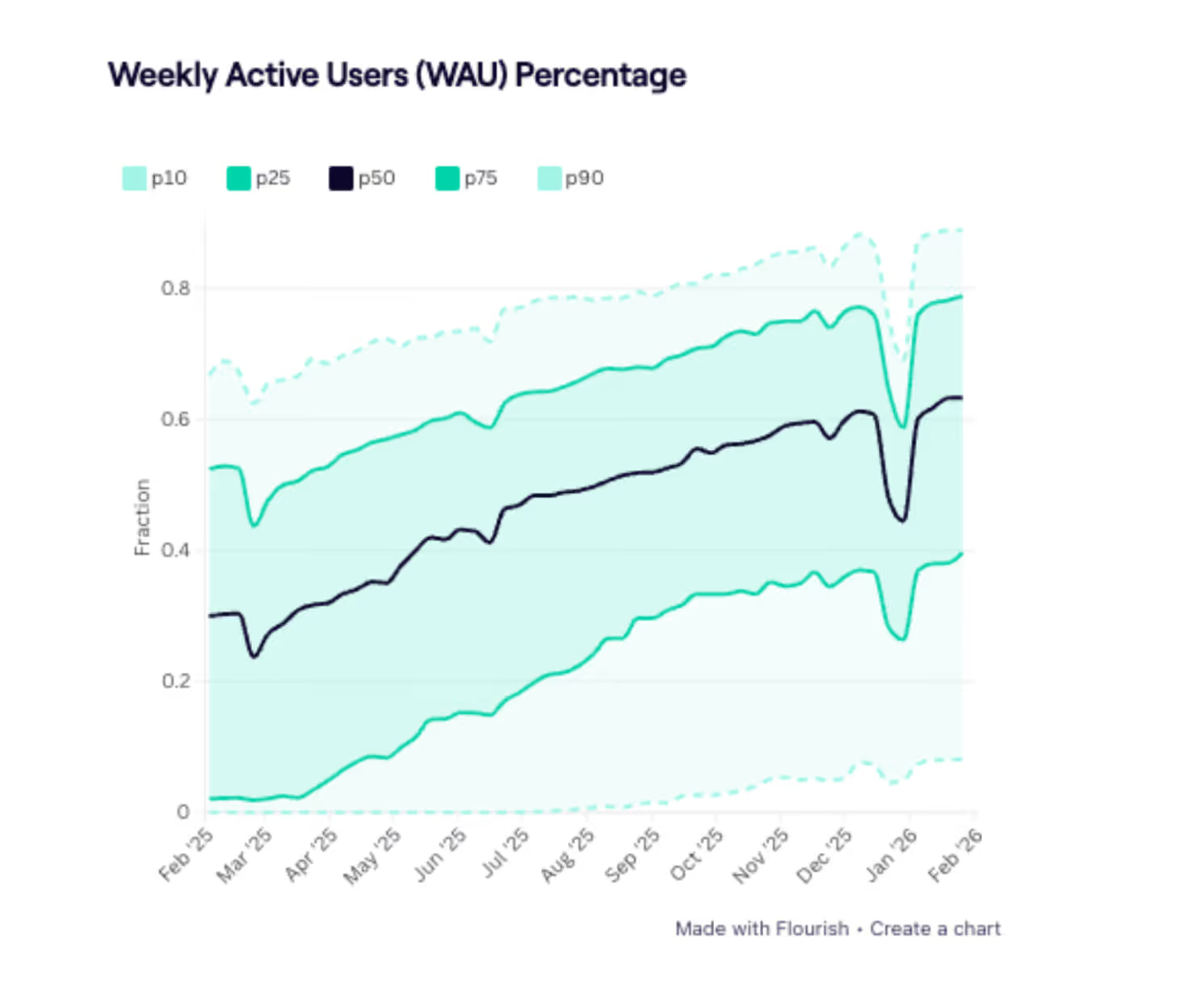

A quality platform increases AI adoption by making it easier to select the right tools and decide which coding suggestions to accept. Visibility into how AI use is evolving across teams and tools provides evidence that your platform is helping expand adoption. You can also benchmark adoption against broader industry trends. Data around Weekly Active Users (WAU), frequent AI users, and AI code percentage will help you understand – and demonstrate – where your platform is providing a competitive advantage.
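To make two of those adoption metrics concrete, the sketch below computes them from raw usage data. The definitions are assumptions for illustration: WAU is treated as the set of distinct users with at least one AI tool event in a seven-day window, and AI code percentage as AI-attributed lines over total lines. Your tooling may define both differently, and the event fields (`user`, `day`) are hypothetical.

```python
from datetime import date, timedelta


def weekly_active_users(events, week_start: date) -> set:
    """Distinct users with at least one AI tool event in [week_start, week_start + 7 days)."""
    week_end = week_start + timedelta(days=7)
    return {e["user"] for e in events if week_start <= e["day"] < week_end}


def ai_code_percentage(ai_lines: int, total_lines: int) -> float:
    """Share of merged lines attributed to AI tools, in percent."""
    return 100 * ai_lines / total_lines if total_lines else 0.0


# Made-up usage events, for illustration only.
events = [
    {"user": "ana", "day": date(2024, 6, 3)},
    {"user": "ben", "day": date(2024, 6, 4)},
    {"user": "ana", "day": date(2024, 6, 6)},
    {"user": "cy", "day": date(2024, 6, 10)},  # falls in the following week
]

wau = weekly_active_users(events, week_start=date(2024, 6, 3))
print(f"WAU: {len(wau)}")
print(f"AI code: {ai_code_percentage(ai_lines=300, total_lines=1200):.1f}%")
```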

AI tools improve productivity, but they can also introduce bugs or compatibility issues. Jellyfish research shows a slight uptick in reverted PRs – code that was deployed but needed to be rolled back – at higher AI adoption tiers. By tracking PR revert rate and other code quality metrics, you can check that you have the right guardrails in place and confirm that your IDP is contributing to better engineering outcomes.
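One simple way to track revert rate is to divide reverted PRs by merged PRs within each group you care about. The Python sketch below assumes each PR record carries a boolean `reverted` flag and an `adoption_tier` label; both field names and the sample records are hypothetical, and the numbers are not research results.

```python
from collections import defaultdict


def revert_rate_by_group(prs, group_key: str) -> dict:
    """Fraction of merged PRs that were later reverted, per value of `group_key`."""
    totals = defaultdict(int)
    reverted = defaultdict(int)
    for pr in prs:
        group = pr[group_key]
        totals[group] += 1
        if pr["reverted"]:
            reverted[group] += 1
    return {group: reverted[group] / totals[group] for group in totals}


# Made-up PR records, for illustration only.
prs = (
    [{"adoption_tier": "low", "reverted": False}] * 4
    + [{"adoption_tier": "low", "reverted": True}]
    + [{"adoption_tier": "high", "reverted": False}] * 3
    + [{"adoption_tier": "high", "reverted": True}]
)

rates = revert_rate_by_group(prs, "adoption_tier")
for tier, rate in rates.items():
    print(f"{tier}: {rate:.0%} of merged PRs reverted")
```

The same function works for any grouping, such as team or repository, by passing a different `group_key`.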

As AI vendors experiment with pricing structures, business leaders will be eager to see how your platform optimizes AI costs. When developers follow golden paths, they're less likely to waste AI tokens on repeated work or trial and error that doesn't yield results. Managers can keep track of AI use within their teams, identify power users, and ensure everyone is getting an appropriate level of training and support.

Monitoring AI impact isn't just about proving platform health; it also helps you keep up with rapidly evolving tools and technologies. Insights into where your platform removes friction and where developers are getting stuck are extremely useful when it comes to refining your product.

The developer experience

Platform engineering teams build a product (an IDP) for their customers (developers). Just as product teams build features with end users in mind, platform teams aim to develop features that improve the developer experience. In practice, that means streamlining the entire software development process by reducing infrastructure complexity and standardizing workflows. There are a few ways to know whether your platform is having the desired effect:

Demonstrate improvements in productivity

IDPs centralize services, documentation, and tooling so that developers don't have to waste time searching for information or seeking approvals. With tribal knowledge encoded into best practices, IDPs also protect senior engineers from constant distractions. Everyone can focus on their own work and high-value tasks, which means happier, more productive teams.

You can quantify those improvements using DORA metrics: compare deployment frequency, lead time for changes, and change failure rate before and after adopting the platform, or before and after a significant platform change. Benchmarking tools that let you compare engineering teams and the wider org against industry peers also reveal where platform investments are making a real difference.
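Two of those DORA metrics can be derived from a plain deployment log. The Python sketch below assumes each deployment record has a `date` and a `caused_failure` flag, set when the deployment led to an incident, rollback, or hotfix; the field names and sample data are hypothetical.

```python
from datetime import date


def deployment_frequency(deploys, start: date, end: date) -> float:
    """Average deployments per week over the inclusive range [start, end]."""
    days = (end - start).days + 1
    in_range = [d for d in deploys if start <= d["date"] <= end]
    return len(in_range) / (days / 7)


def change_failure_rate(deploys) -> float:
    """Fraction of deployments that caused a failure in production."""
    if not deploys:
        return 0.0
    return sum(1 for d in deploys if d["caused_failure"]) / len(deploys)


# Made-up deployment log, for illustration only.
deploys = [
    {"date": date(2024, 6, 3), "caused_failure": False},
    {"date": date(2024, 6, 5), "caused_failure": True},
    {"date": date(2024, 6, 10), "caused_failure": False},
    {"date": date(2024, 6, 12), "caused_failure": False},
    {"date": date(2024, 6, 17), "caused_failure": False},
    {"date": date(2024, 6, 19), "caused_failure": True},
    {"date": date(2024, 6, 24), "caused_failure": False},
    {"date": date(2024, 6, 26), "caused_failure": False},
]

freq = deployment_frequency(deploys, date(2024, 6, 1), date(2024, 6, 28))
cfr = change_failure_rate(deploys)
print(f"{freq:.1f} deploys/week, {cfr:.0%} change failure rate")
```

Computing the same figures for a window before the platform change and a window after it gives you the comparison described above.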

Understand developer sentiment

To achieve a holistic understanding of the developer experience, it's important to complement system signals with human feedback. One effective way to do this is to create DevEx surveys that ask developers specifically about their experience using the platform.

Questions might cover platform usage and satisfaction, self-service capabilities, and tooling and integration. You can also offer opportunities for open-ended feedback, for example, "What would you change or add to the platform to improve your experience?" or "How could the platform support your daily workflow?" Comparing survey results to performance data allows platform engineering teams to prioritize issues that are having the biggest impact on productivity.

Platform engineering makes a difference, but business leaders won't just take your word for it. You need metrics and an accurate understanding of developer sentiment to show how and where your platform adds value.

To succeed with more autonomous AI agents, engineering organizations need the structure, guardrails, and visibility that only a quality internal developer platform and skilled platform engineering team can provide.
