I spend most of my time talking to platform engineering teams. Here’s what they almost never say out loud, but show me every single time.

In the last six months, I’ve sat across from platform engineering teams at financial institutions, global tech companies, and cloud-native enterprises. Different industries, stacks, and org structures. But the same conversation, almost word for word.

Someone pulls up a spreadsheet. Or a Notion doc. Or (no joke) a shared Google Sheet maintained by one engineer who has been at the company since the beginning. And they explain, apologetically, that this is how they track what’s actually deployed versus what their IaC says should be deployed.

That spreadsheet is the operational gap made visible.

The gap between what you declared in code and what actually exists in your cloud. The gap between knowing a resource is misconfigured and having a governed path to fix it. The gap between your audit log and the truth. Most organizations manage this gap through institutional memory, glue scripts, and the heroics of a handful of engineers who know where the bodies are buried.

That worked when the pace of change was slow. It does not work anymore.

The split-brain problem no one talks about

Platform engineering has always been divided between two modes of operation.

Day 1: stand infrastructure up safely, with the right policies, through approved workflows.

Day 2: keep it that way: catch drift, manage cost, maintain compliance, understand what’s actually running versus what was intended.

These two modes live in separate tooling. They always have. And for a long time, the gap between them was manageable because the rate of change in most cloud environments was slow enough for humans to bridge it manually.

That calculus has changed.

LLMs have accelerated the SDLC by an order of magnitude. Infrastructure that used to take days to provision takes minutes. The volume of changes moving through a production environment at a mid-to-large enterprise has grown faster than any manual review process can absorb.

The gap is the same gap it always was, but the consequences of leaving it unmanaged are compounding.

What I see in the field: teams with strong IaC practices and zero confidence in what percentage of their cloud is actually governed by that IaC. Drift they can detect, but cannot remediate safely. Compliance postures that depend on someone remembering to run a script and file a ticket. Governance as ceremony, not infrastructure.

What visibility alone cannot do

The teams I talk to are not short on data. Most have invested heavily in observability, CSPM tools, and asset inventories. They can tell you what exists. They can surface findings. They generate reports.

What they cannot do is close the loop.

Knowing a resource exists does not mean it is governed. Knowing something is misconfigured does not mean you have a controlled, auditable path to fix it. Discovery without remediation is just a longer to-do list.

The other side of this problem is equally real. Strong IaC governance at the point of delivery does not tell you what is happening in the portion of your cloud that was never codified. It does not tell you about the environment spun up manually last quarter, or the resource that drifted after a late-night incident response.

You need both:

- a complete picture of what exists

- the authority to act on it within governed workflows

Without both, you are running cloud operations on partial information and hoping the parts you cannot see do not cause a problem.

Autonomous operations require legible infrastructure

The mature response to the Operational Gap is not another point solution. It is a shift toward autonomous cloud operations: a model where the platform continuously enforces its own standards, detects deviations, and acts on them without waiting for a human to notice.

But autonomous operations has a prerequisite that most teams have not confronted directly:

Your infrastructure must be legible to the system acting on it. Not just inventoried or tagged, but actually legible.

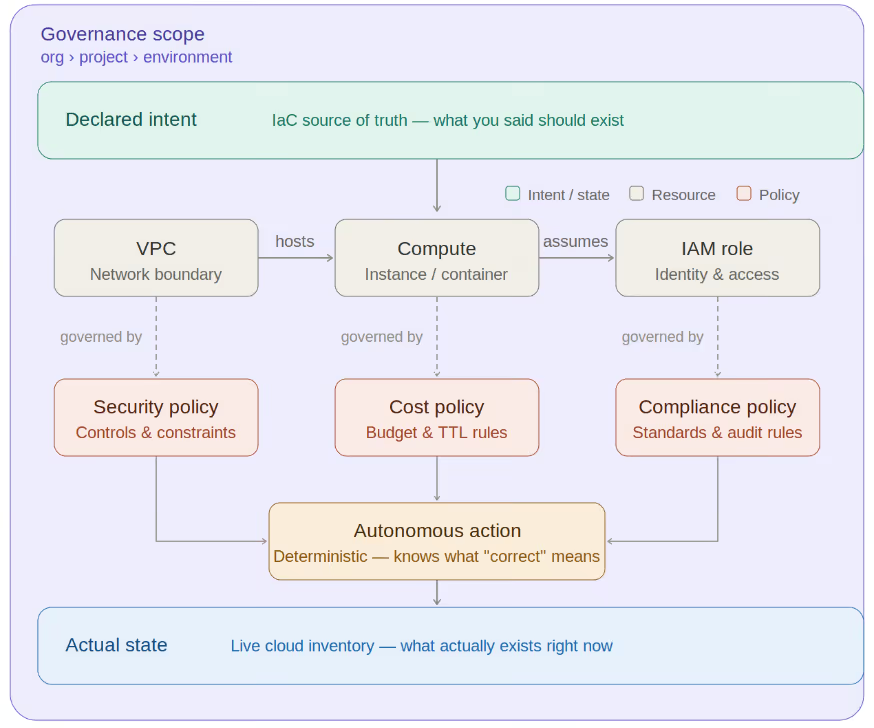

By legible, I mean expressed in a structured, machine-readable model that encodes:

- What resources exist

- How they relate to each other

- What policies govern them

- Who owns them

- What the intended state actually is

An LLM can generate Terraform. It cannot safely govern your cloud unless it understands the ontology of your infrastructure.

Infrastructure ontology is the structured representation of resources, relationships, constraints, and intent that allows automated systems to reason about what is correct for your organization. Without that structure, an autonomous system is forced to infer meaning from incomplete context. That leads to confident guessing at scale, a risk profile no platform team should accept.

Consider a simple example: a security group open to the internet. A Cloud Security Posture Management (CSPM) tool can flag the finding. An asset inventory can record its existence. But an autonomous system needs additional context:

- Is the workload internet-facing by design?

- What environment tier is it in?

- Which policy applies?

- Who owns the resource?

- What remediation path is allowed?

- What dependencies would be affected?

Without an ontological model capturing this context, automation cannot act deterministically. Defining this ontology is not a one-time exercise. It is an operational discipline. The ontology must reflect actual state, not just declared state. It must capture governance scope, relationships, and intent in a way that is queryable, auditable, and actionable.

Organizations pursuing autonomous cloud operations have two paths: build the ontology layer internally, or adopt platforms that provide it. The build path is possible. It is also the kind of foundational investment that consumes platform engineering capacity for years and still leaves teams maintaining internal tooling instead of delivering platform value.

Why governance must become infrastructure

Platform teams have historically underinvested in governance tooling for a simple reason: when governance works, nothing happens. The misconfiguration that did not cause an incident is invisible. The cost overrun prevented at deploy time never appears on a dashboard. The value exists in avoided outcomes. At scale, that equation breaks down.

When infrastructure change velocity is high, governance cannot remain a process executed by humans. It must become a continuously operating layer embedded into the platform itself: Encoded, automatic, and always present.

It should not be something engineers remember to check, but something the platform enforces by default. We explored this idea with the PlatformEngineering.org community in a session titled [Infra Governance for Rulebreakers: How to Make Guardrails Invisible](https://www.youtube.com/live/WnJE2tLF8LI?si=tVJbzF3k1BVm6x&t=105).

The core idea was simple:

Guardrails that engineers feel are guardrails they route around. Governance that works at scale disappears into the workflow, present everywhere and visible nowhere. Invisible guardrails are the first step. Autonomous guardrails are the next.

Once infrastructure is represented ontologically, with relationships, policies, and intent modeled explicitly, governance becomes something the system can reason about and enforce continuously.

Bridging intent and reality

The combination of governed workflows and normalized infrastructure visibility begins to form the ontology layer autonomous operations require: Intent on one side, actual state on the other, and a governed remediation path connecting them.

When intent and state diverge.. .and they will... the platform has the context required to act deterministically, rather than simply surface another finding for manual triage.

The operational gap did not start with AI.

…But AI has made it impossible to ignore. As LLM-assisted development compresses the time between idea and production, governance must operate at the same speed. No, spreadsheets cannot keep up, glue scripts cannot keep up, and institutional memory cannot keep up.

The teams that close this gap early will operate with a fundamentally different level of confidence than those treating it as a future problem.

From the field, the future problem is already here.